ElastiCache t4g.micro Results: Redis 7.1 and Valkey 7.2, 8.2, 9.0

The cache.t4g.micro result set currently splits into two useful signals: Valkey 7.2 leads on throughput and client-side average latency, while Valkey 9.0 shows the lowest throughput variation and the lowest peak engine CPU. Redis 7.1 stays in the set as the Redis reference run.

Valkey 9.0 ElastiCache t4g.micro benchmark

Valkey 8.2 ElastiCache t4g.micro benchmark

Valkey 7.2 ElastiCache t4g.micro benchmark

Redis 7.1 ElastiCache t4g.micro benchmark

Hit Rate Fixed, Evictions Missing

After "When the Cache Never Warms Up", I went back and tuned the test itself. I wanted memtier to show some real cache hit rate during the run.
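For readers who want to check a hit rate themselves, here is a minimal sketch of the arithmetic. It assumes the rate is derived from the cumulative `keyspace_hits` and `keyspace_misses` counters that Redis and Valkey report in `INFO stats`; the post does not say which source the lab uses, and the function names and snapshot dictionaries are hypothetical.

```python
def hit_rate(keyspace_hits: int, keyspace_misses: int) -> float:
    """Return the cache hit rate as a fraction in [0, 1]."""
    total = keyspace_hits + keyspace_misses
    if total == 0:
        # No lookups at all: report 0.0 rather than dividing by zero.
        return 0.0
    return keyspace_hits / total


def interval_hit_rate(start: dict, end: dict) -> float:
    """Hit rate over one run, from two snapshots of the cumulative counters.

    `start` and `end` are assumed to be dicts holding the integer
    `keyspace_hits` and `keyspace_misses` values taken at the beginning
    and end of the run (hypothetical shape, not the lab's actual format).
    """
    hits = end["keyspace_hits"] - start["keyspace_hits"]
    misses = end["keyspace_misses"] - start["keyspace_misses"]
    return hit_rate(hits, misses)
```

Taking the difference between two snapshots matters because the counters are cumulative since the node started, so a single reading mixes the current run with whatever traffic came before it.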

When the Cache Never Warms Up

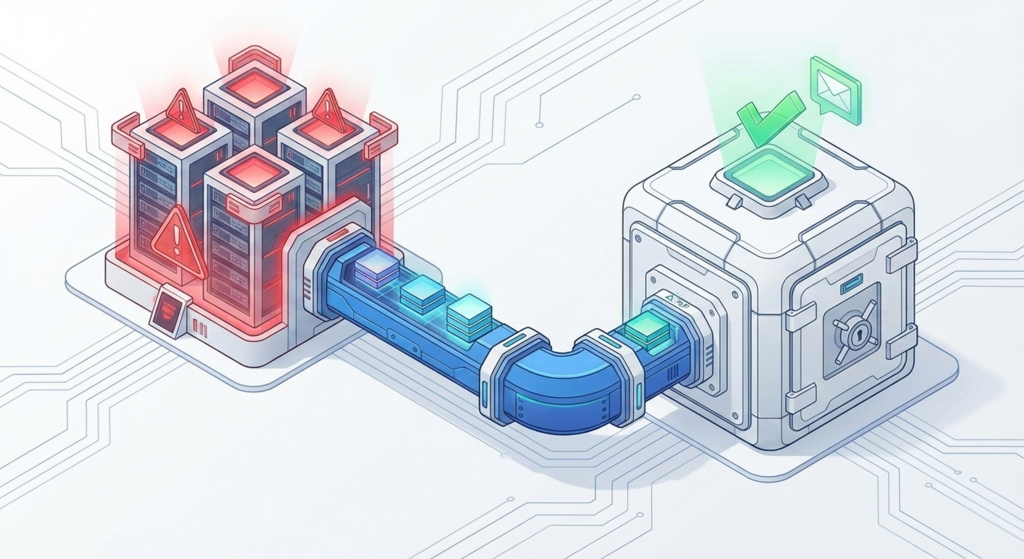

I did not want the results to live only in CloudWatch. That works for running the test, but not so well for sharing or keeping the output around.

So I added the "Reporting" branch, and the first result is shown below.

Hardening the ElastiCache Benchmark: Observable Lifecycle & Durable S3 Exports

AWS ElastiCache Lab (built on Amazon ElastiCache) is a repeatable performance harness for comparing cache configurations under controlled load. Each run is time-boxed, produces exportable artifacts, and tears down deterministically to keep both cost and comparability under control.

As the harness scales up (more Amazon ECS tasks, higher memory fill rate), the bottleneck often moves away from ElastiCache itself and toward the lifecycle boundary: shutdown, exports, and verification. The engine can change (Redis today, Valkey next); the boundary and the evidence pipeline should not.

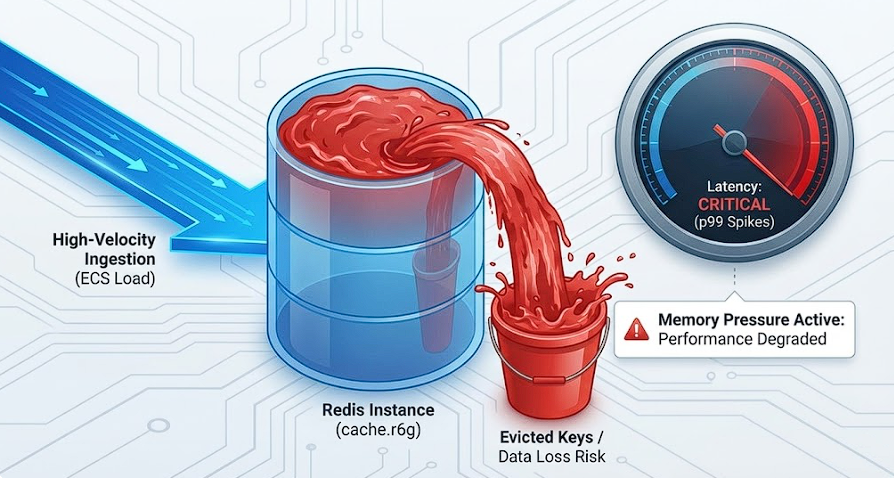

Designing for the Cliff: Calibrating Load to Reach maxmemory in a 1-Hour Run

This project has a hard contract: every run is exactly one hour. That constraint is what keeps results comparable across configurations and keeps the exported visualizations consistent.

After I fixed shutdown reliability, the next issue wasn't infrastructure. It was methodology.

The discovery: the "happy path" trap

Reviewing telemetry from a standard run, I noticed that my default load generation (a single ECS task) was not reliably pushing Redis into the state I actually care about.

Memory usage was climbing, but in many cases the run could finish without reaching maxmemory. That means the test is still useful as a smoke check, but it's not a strong performance validation: it mostly measures a cache that's not under pressure.
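The calibration question can be framed as simple arithmetic: how fast does the data set have to grow to hit maxmemory with time to spare inside the one-hour window? The sketch below is one way to estimate that under stated assumptions. The function names, the `target_fraction` parameter, and the idea of a fixed average size per key are all hypothetical and not taken from the lab's code.

```python
import math

RUN_SECONDS = 3600  # the one-hour run contract


def required_fill_rate(maxmemory_bytes: int,
                       baseline_bytes: int,
                       run_seconds: int = RUN_SECONDS,
                       target_fraction: float = 0.5) -> float:
    """Net bytes/second of new data needed to reach maxmemory.

    `target_fraction` is the point in the run (0 < f <= 1) by which
    maxmemory should be reached, so the rest of the run measures the
    cache under memory pressure. This is an assumed tuning knob.
    """
    if not 0 < target_fraction <= 1:
        raise ValueError("target_fraction must be in (0, 1]")
    remaining = max(maxmemory_bytes - baseline_bytes, 0)
    return remaining / (run_seconds * target_fraction)


def required_sets_per_second(fill_rate_bytes: float,
                             bytes_per_key: int) -> int:
    """New keys/second needed, assuming a fixed average footprint per key.

    `bytes_per_key` should include value size plus per-key overhead;
    it is an estimate, so treat the result as a starting point.
    """
    if bytes_per_key <= 0:
        raise ValueError("bytes_per_key must be positive")
    return math.ceil(fill_rate_bytes / bytes_per_key)
```

The estimate only counts writes that create new keys. Overwrites of existing keys and keys that expire before the target time do not move memory toward the limit, so a real workload with a mixed read/write ratio needs a higher total request rate than this number suggests.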

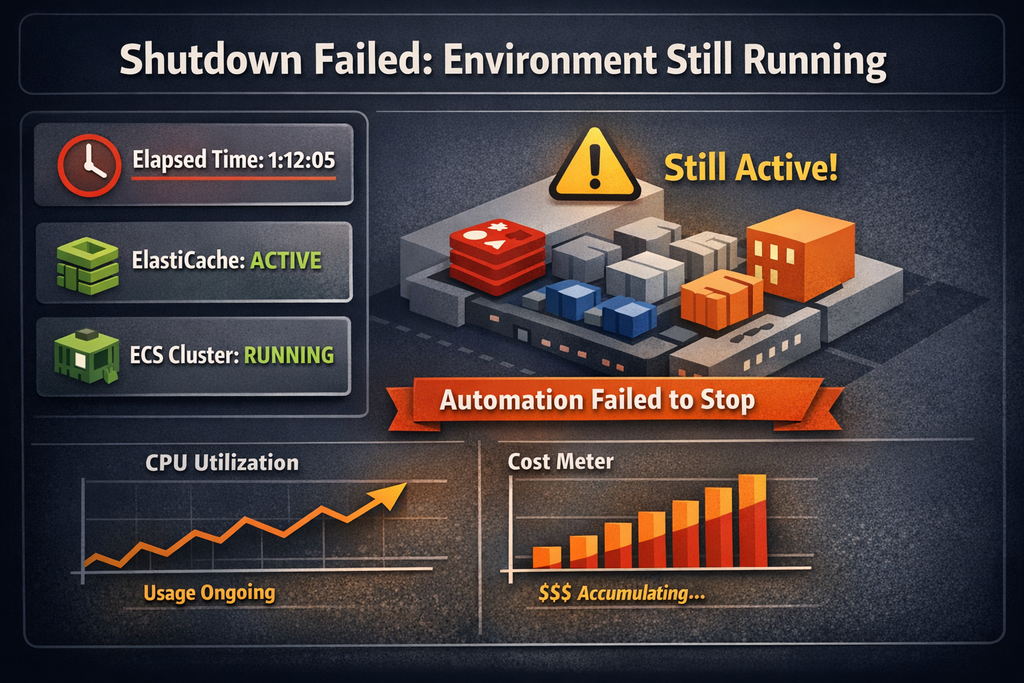

Shutdown Didn't Happen: Placeholder Semantics Bug

The AWS ElastiCache Lab project has a hard rule: a test run is defined as one hour. That only stays true if the lab reliably shuts down on schedule. If it doesn't, I lose cost control and, more importantly for benchmarking, I risk starting the next run from a non-clean baseline.

I hit exactly that problem on an evening run.

What I observed

The run finished, but the environment was still up. Nothing looked "broken" in the usual sense: services were alive and responsive. In this lab, though, "still works" past the run boundary is a defect, because it means the lifecycle automation failed silently.
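One way to make that kind of silent failure visible is to treat "still running past the boundary" as an explicit check rather than something noticed by hand. The sketch below is illustrative only: the function name, the grace period, and the idea of passing in a running-task count are assumptions, not the lab's actual mechanism.

```python
from datetime import datetime, timedelta, timezone

RUN_DURATION = timedelta(hours=1)  # the one-hour run rule


def is_overdue(started_at: datetime,
               now: datetime,
               running_tasks: int,
               grace: timedelta = timedelta(minutes=5)) -> bool:
    """Return True if the environment is still up past the run boundary.

    `running_tasks` is assumed to come from whatever inventory call the
    lab uses (for example, a count of ECS tasks still running). `grace`
    is a hypothetical allowance for exports and teardown to finish.
    """
    deadline = started_at + RUN_DURATION + grace
    return now > deadline and running_tasks > 0


# Example: a run that started at 18:00 UTC and still has a task at 19:10.
started = datetime(2024, 1, 1, 18, 0, tzinfo=timezone.utc)
overdue = is_overdue(started, started + timedelta(minutes=70), running_tasks=1)
```

A check like this is deliberately independent of whether the services respond: a healthy endpoint after the deadline still counts as a failure.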